Deconstructing the Modern Big Data Analytics Market Platform

The Architectural Core of a Big Data Analytics Market Platform

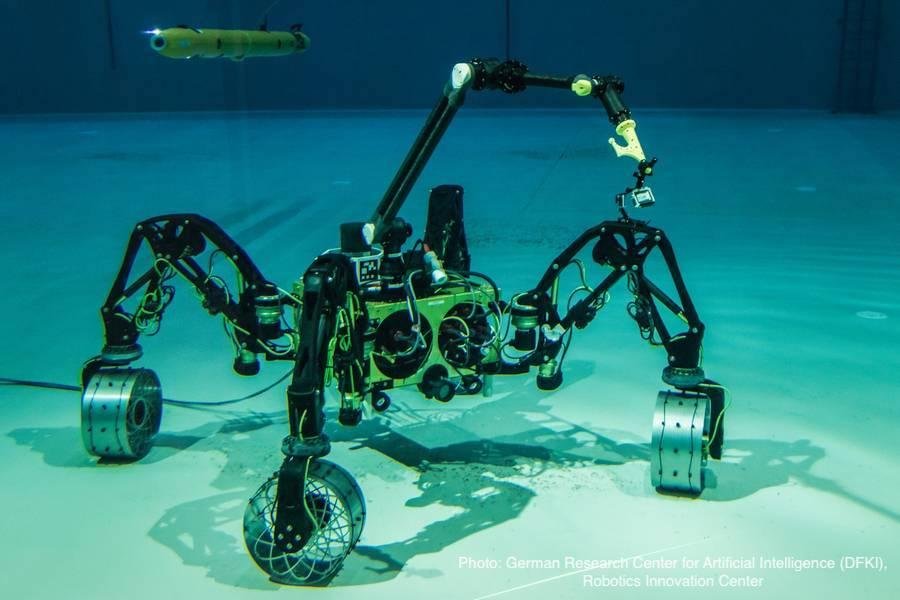

A modern big data analytics platform is not a single product but an integrated ecosystem of technologies that handles the entire data lifecycle, from ingestion to insight. Its architecture is typically layered so that each function can be managed and scaled separately. The first layer is Data Ingestion, which collects raw data from many sources, including transactional databases, web logs, social media feeds, IoT sensors, and streaming applications. This layer uses tools such as Apache Kafka, Apache Flume, or cloud-native services to capture data reliably, both in batches and as real-time streams. The second layer is Data Storage, which has evolved from traditional data warehouses toward more flexible architectures. Data lakes, built on technologies such as the Hadoop Distributed File System (HDFS) or cloud object storage (e.g., Amazon S3), have become central because they can hold vast amounts of raw data in its native format. They are often complemented by data warehouses that hold structured, analysis-ready data. The third and most critical layer is Data Processing and Analytics, where raw data is cleaned, transformed, and analyzed. This layer is powered by processing engines such as Apache Spark, which supports batch processing, interactive queries, and machine learning in a single framework.
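The three layers can be illustrated with a small, self-contained sketch. It uses only the Python standard library and does not call Kafka, HDFS, S3, or Spark; the names `ingest`, `RawStore`, and `total_by_user`, as well as the sample order records, are hypothetical and chosen only to show how responsibilities might be split between layers.

```python
import json
from collections import defaultdict


def ingest(sources):
    """Ingestion layer: yield (source_name, raw_line) pairs, leaving data untouched."""
    for name, lines in sources.items():
        for line in lines:
            yield name, line


class RawStore:
    """Storage layer: a stand-in for a data lake that keeps records in native format."""

    def __init__(self):
        self._objects = defaultdict(list)

    def put(self, source, raw_line):
        self._objects[source].append(raw_line)

    def scan(self, source):
        return list(self._objects[source])


def total_by_user(raw_lines):
    """Processing layer: parse, clean, and aggregate raw records."""
    totals = defaultdict(float)
    for line in raw_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # cleaning step: skip malformed records
        if not isinstance(record, dict):
            continue
        user = record.get("user")
        amount = record.get("amount")
        if user is None or not isinstance(amount, (int, float)):
            continue  # cleaning step: skip incomplete records
        totals[user] += amount
    return dict(totals)


# Hypothetical sample data: one source containing one malformed line.
sources = {
    "orders": [
        '{"user": "a", "amount": 10}',
        "not json",
        '{"user": "b", "amount": 5.5}',
        '{"user": "a", "amount": 2}',
    ]
}

store = RawStore()
for source, line in ingest(sources):
    store.put(source, line)

totals = total_by_user(store.scan("orders"))
print(totals)
```

Note that the malformed line is still kept in the store and is only dropped during processing. This mirrors the data lake idea of keeping raw data as it arrived and deferring cleaning to the processing layer.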

Key Technologies and Frameworks Powering Modern Platforms

The engine room of a big data analytics platform is powered by a diverse set of open-source and commercial technologies. The Apache Hadoop ecosystem, while evolving, still provides foundational components for many on-premise and even cloud platforms, including HDFS for distributed storage and YARN for cluster resource management. However, Apache Spark has largely superseded Hadoop's MapReduce as the de facto standard for large-scale data processing. Its in-memory computing model avoids writing intermediate results to disk between stages, which typically makes it much faster for iterative and interactive workloads. Various database technologies are used to manage and query massive datasets. SQL-on-Hadoop engines such as Apache Hive and Apache Impala let analysts run familiar SQL queries on data stored in Hadoop. NoSQL databases such as Apache Cassandra, MongoDB, and Apache HBase handle unstructured and semi-structured data at scale, offering schema flexibility and horizontal scalability that traditional relational databases struggle to match. Streaming platforms are crucial for real-time analytics. Apache Kafka is widely used for building real-time data pipelines, while stream processing frameworks such as Apache Flink and Spark Structured Streaming analyze data as it arrives, powering applications from real-time dashboards to immediate fraud detection. Together, these technologies form a powerful, albeit complex, toolkit for building robust analytics capabilities.
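To make "analysis of data as it arrives" more concrete, the following sketch counts events per key in fixed, non-overlapping (tumbling) time windows, a common building block in stream processing. It is plain Python, not the Flink or Spark API; the function name `tumbling_window_counts`, the card identifiers, and the threshold of three events per window are assumptions made for illustration. It also assumes events arrive ordered by timestamp, which real frameworks do not require.

```python
from collections import Counter


def tumbling_window_counts(events, window_seconds):
    """Yield (window_start, Counter) for each non-empty fixed-size window.

    `events` is an iterable of (timestamp_seconds, key) pairs that is
    assumed to be ordered by timestamp.
    """
    current_start = None
    counts = Counter()
    for ts, key in events:
        window_start = (ts // window_seconds) * window_seconds
        if current_start is None:
            current_start = window_start
        elif window_start != current_start:
            # The previous window is complete: emit it and start a new one.
            yield current_start, counts
            current_start, counts = window_start, Counter()
        counts[key] += 1
    if current_start is not None:
        yield current_start, counts  # flush the final window


# Hypothetical card transactions as (seconds since start, card id).
events = [
    (0, "card-1"), (3, "card-1"), (7, "card-2"),
    (12, "card-1"), (14, "card-1"), (15, "card-1"),
    (31, "card-2"),
]

windows = list(tumbling_window_counts(events, window_seconds=10))

# A toy fraud rule: flag any card seen 3 or more times within one window.
suspicious = [
    (start, card)
    for start, counts in windows
    for card, n in counts.items()
    if n >= 3
]
print(suspicious)
```

Because results are emitted as soon as a window closes, a downstream dashboard or alerting rule can react without waiting for a batch job to finish.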

The Cloud-Native Revolution in Big Data Platforms

The advent of cloud computing has revolutionized the architecture and accessibility of big data analytics platforms. Cloud hyperscalers such as AWS, Microsoft Azure, and Google Cloud have abstracted away much of the complexity of building and managing big data infrastructure. They offer managed services that cover every layer of the analytics pipeline. For storage, services such as Amazon S3, Azure Data Lake Storage, and Google Cloud Storage provide virtually limitless, highly durable, and cost-effective object storage that forms the basis of cloud data lakes. For processing, managed Spark services such as Amazon EMR, Azure Databricks, and Google Cloud Dataproc let users spin up and scale processing clusters in minutes and pay only for the resources they use. The most disruptive innovation has been the rise of cloud-native data warehouses such as Google BigQuery, Snowflake, and Amazon Redshift (including Redshift Spectrum, which queries data in place in Amazon S3). These platforms separate storage from compute, so each can scale independently, and they execute queries in a massively parallel fashion. Where they are offered in serverless form, as with BigQuery, they remove most infrastructure management, allowing data teams to focus on analysis and insight generation. The cloud has not only lowered the barrier to entry but has also become the primary hub for innovation in the big data analytics space.
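The separation of storage and compute can be sketched in a few lines. In this toy example, a dictionary stands in for partitioned object storage and a thread pool stands in for a compute cluster; `OBJECT_STORE`, `scan_partition`, and `run_query` are hypothetical names, and no real cloud service is called. The point is only that the number of workers can change without touching the stored data.

```python
from concurrent.futures import ThreadPoolExecutor

# Storage: a stand-in for partitioned objects in a cloud object store.
OBJECT_STORE = {
    "sales/part-0": [120, 80, 45],
    "sales/part-1": [300, 15],
    "sales/part-2": [60, 60, 60, 20],
}


def scan_partition(key):
    """Compute: aggregate one partition independently of the others."""
    return sum(OBJECT_STORE[key])


def run_query(prefix, workers):
    """Fan partition scans out over `workers` threads, then combine the partials."""
    keys = sorted(k for k in OBJECT_STORE if k.startswith(prefix))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(scan_partition, keys))
    return sum(partials)


# The same stored data is queried with a small and a large compute pool.
small = run_query("sales/", workers=1)
large = run_query("sales/", workers=4)
print(small, large)
```

Both calls return the same total; only the degree of parallelism differs. In a real warehouse, the equivalent choice is how much compute to attach to a query while the data stays where it is.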

The Future of Platforms: Data Fabric and Data Mesh

As organizations mature in their data journey, the centralized data lake and data warehouse models are beginning to show strain, leading to the emergence of new architectural paradigms such as Data Fabric and Data Mesh. A Data Fabric is an architectural approach that aims to create a unified, intelligent, and automated data management layer across a distributed data landscape. It uses metadata, knowledge graphs, and AI/ML to automate data integration, governance, and discovery, providing a consistent data experience regardless of where the data resides, whether in multiple clouds, on-premise systems, or edge devices. It aims to solve the problem of data silos without requiring all data to be physically moved to a central location. Data Mesh, on the other hand, is a more radical, decentralized organizational and architectural approach. It advocates treating "data as a product," with domain-oriented, cross-functional teams owning their data and the pipelines that serve it. It pushes responsibility for data ownership and quality to the business domains that create and understand the data best, supported by a self-service data infrastructure platform and governed through federated, organization-wide standards. Both concepts move away from a single central repository managed by a single central team. They aim to address the scalability bottlenecks of centralized data teams, promising a more agile, scalable, and business-aligned future for big data analytics platforms.
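One way to picture "data as a product" with federated governance is a shared catalog that accepts domain-owned products only if they meet a few organization-wide rules. The sketch below is an illustration under assumed names (`DataProduct`, `governance_violations`, `Catalog`) and assumed rules (every product needs an owner domain, a description, and a schema); it is not drawn from any specific Data Mesh tool.

```python
from dataclasses import dataclass


@dataclass
class DataProduct:
    """A dataset published by a business domain for others to consume."""
    name: str
    owner_domain: str
    schema: dict  # column name -> type name
    description: str = ""


def governance_violations(product):
    """Federated governance: global rules that every domain's product must pass."""
    problems = []
    if not product.owner_domain:
        problems.append("missing owner domain")
    if not product.description:
        problems.append("missing description")
    if not product.schema:
        problems.append("missing schema")
    return problems


class Catalog:
    """Self-service platform piece: domains register and discover products here."""

    def __init__(self):
        self._products = {}

    def register(self, product):
        problems = governance_violations(product)
        if problems:
            raise ValueError(f"{product.name}: " + ", ".join(problems))
        self._products[product.name] = product

    def find_by_domain(self, domain):
        return [p for p in self._products.values() if p.owner_domain == domain]


catalog = Catalog()
catalog.register(
    DataProduct(
        name="settled-transactions",
        owner_domain="payments",
        schema={"transaction_id": "string", "amount": "decimal"},
        description="Card transactions after settlement, updated daily.",
    )
)
print([p.name for p in catalog.find_by_domain("payments")])
```

The domain team decides what the product contains, while the catalog enforces only the shared minimum. A product without an owner, description, or schema is rejected with a `ValueError` at registration time.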
